We focus on what you really need to move the needle – without the empty buzz. Do you think that sounds good?

We do.

Artificial Intelligence

People & Culture

Five practical initiatives to support agentic AI skills at Vincit

Commerce & engagement

Artificial Intelligence

Beyond the chatbot: how to boost retail ROI with agentic AI

Software Development

Artificial Intelligence

The hidden cost of speed: when the bill for AI debt comes due

Commerce & engagement

Artificial Intelligence

What agentic AI in commerce looks like in practice – a concrete use case

ecommerce

Software Development

How AI agents are changing the way we build digital solutions

Commerce & engagement

Artificial Intelligence

Why agentic AI in commerce is a big opportunity for B2B sellers

Processes & platforms

SAP

3 ways that SAP BTP can help your organization succeed

Technology

Data & Analytics

How Nordic manufacturers are using AI to create better business outcomes

People & Culture

Our candidate-friendly recruitment process at Vincit

Technology

Cloud Services

5 key takeaways from the Grow with Vincit customer event

Commerce & engagement

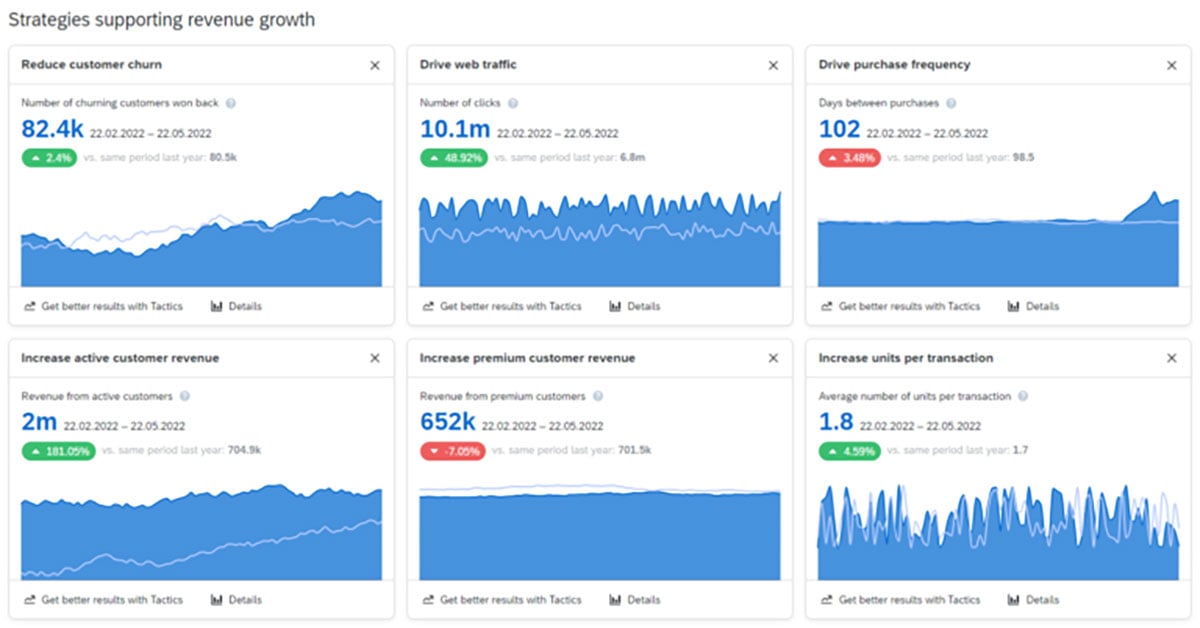

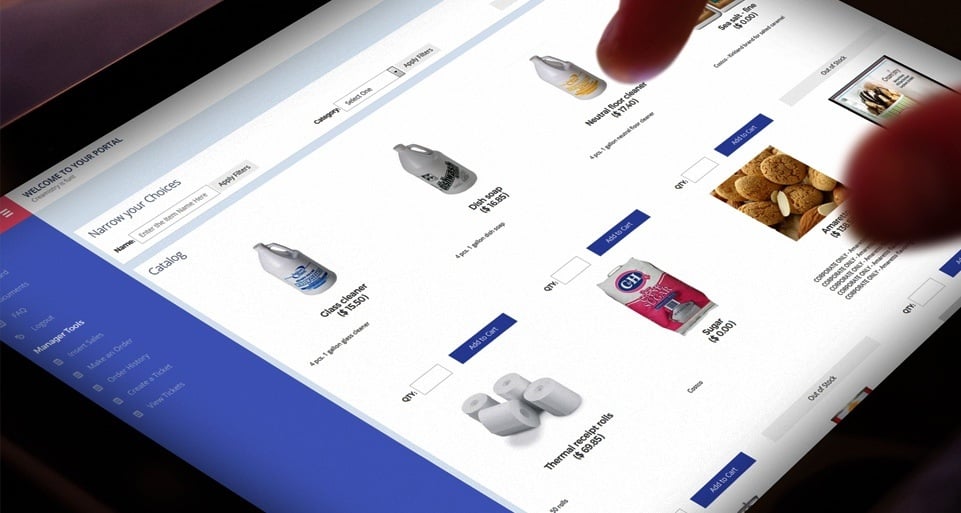

Improving the operational efficiency of your ecommerce setup

Artificial Intelligence

Data-driven operations

“Would you like to call a human to chat?” – what can GenAI software actually bring to customer service?

Commerce & engagement

Downloadable

Guide: How data can power your omnichannel customer experience

Web Development

Accessibility

Practical tips to proactively manage the accessibility debt

ecommerce

Commerce & engagement

Five B2B ecommerce trends that your company can take advantage of

Commerce & engagement

The 4 business issues that drive the need for B2B commerce investments

People & Culture

Better Mondays

People & Culture

The first steps to being a better ally in the workplace

Commerce & engagement

Whitepaper: Make your eCommerce recession resilient by going headless

People & Culture

Creating the best place to grow – Univincity 2.0

Processes & platforms

Picking the best service model to keep your solutions running smoothly

Design

Business Processes

Mind the gap – how we get from business vision to a functional and concrete enterprise architecture

Commerce & engagement

The power of customer data – your organization’s most crucial asset

Processes & platforms

SAP

The three kinds of tools for creating useful SAP apps

People & Culture

What does humane leadership mean in practice at Vincit?

Sustainability

Digital platform economy

How do we design sustainable business operations for the future with AI?

Processes & platforms

SAP

Enable your business growth with SAP S/4HANA Cloud and Vincit

Applications

Processes & platforms

Why simplifying business apps is key to a good user experience?

Technology

Strategy

Three Strategic Digital Innovation Initiatives Every Manufacturer Needs

Processes & platforms

SAP

Easily produce SAP apps your business and users will love

Digital platform economy

Why a digital strategy is a crucial guide for your business

Artificial Intelligence

How to help ensure a successful AI project - 3 tips for business leaders

Web Development

Winning experiences & solutions

Accessibility is not accessible. A call to action

Applications

What’s the best technology to use if you want to create a mobile app?

Artificial Intelligence

Using, Not Building

People & Culture

Extraordinary things are created together – what we mean by Vincit’s new value

Winning experiences & solutions

Complexity, simplified

People & Culture

Leadership in a Time of Unprecedented Change

People & Culture

5 Ways to Keep Employees Motivated

People & Culture

Promoting a culture where everyone feels welcome and appreciated as themselves

People & Culture

Prepare not Panic: Managing Anxiety During a Difficult Time

People & Culture

The Future of the Workplace: How to Thrive not just Survive

People & Culture

Strengthening Company Culture in 2021 & Beyond

Vodcast

Artificial Intelligence

Transforming Legal Practices with Generative AI

Web Development

Winning experiences & solutions

Go the extra accessibility mile – detect commonly undetected issues

Commerce & engagement

How to make sure your move into B2B digital commerce is a success

Artificial Intelligence

AI governance – 5 important things to know about the EU AI Act

Webinar

How to start taking advantage of generative AI now – watch the webinar!

Vodcast

Artificial Intelligence

Navigating Recruitment's AI revolution

Artificial Intelligence

Making medicine smarter – 5 ways AI will impact healthcare professions

Artificial Intelligence

6 important things to keep in mind when using generative AI

Commerce & engagement

The three key ways how digital supply chains lead to customer excellence

Artificial Intelligence

Streamlining product development - 8 ways that AI will make an impact

ecommerce

Web Development

Reimagining the ecommerce Industry: Unveiling digital transformation strategies

Artificial Intelligence

Better efficiency, empathy, and quality – 8 ways that AI will change customer service

Artificial Intelligence

Helping CDOs – 7 ways generative AI will speed up digital development

Applications

Commerce & engagement

Guide to E-Commerce App Development: Steps, Key Features and Trends

Artificial Intelligence

Streamlining HR – 9 ways generative AI will impact talent acquisition

Commerce & engagement

5 common mistakes to avoid when making a digital business transformation

Artificial Intelligence

Updating IT support – 7 ways that generative AI will make a big difference

Technology

Commerce & engagement

“New” marketing platform from SAP – what’s interesting in Emarsys?

Design

Consumer brands

Customer Engagement vs. Customer Experience in E-Commerce

Design

Customer Engagement

User Experience vs. Customer Experience: Key Differences

Artificial Intelligence

Here’s 7 easy ways you can start using generative AI at work now

Applications

Design

Cracking the Code of MVP Design: Prioritize UX

Applications

Design

Styling a Superior User Experience on Your App

Winning experiences & solutions

Making marketing more effective – 6 ways how generative AI will make an impact

Wholesale and retail

Winning experiences & solutions

Leveraging AI to Optimize Your E-Commerce Store

Winning experiences & solutions

5 ways that generative AI will change legal work

Digital platform economy

How to Reduce the Dangers of Broken Authentication on Your App

Artificial Intelligence

7 practical ways that AI will impact continuous software development

Commerce & engagement

7 reasons why now is the time to pursue digital business transformation

Digital platform economy

Winning experiences & solutions

Configuring Your App's User Roles for Safety and Success

Artificial Intelligence

Winning experiences & solutions

Nine ways that generative AI will power sales processes in the future

Artificial Intelligence

Winning experiences & solutions

Blog: New to Generative AI? 5 things to do now as a business leader

Wholesale and retail

Winning experiences & solutions

Blog: Guide to Creating an E-commerce Customer Experience (CX)

Commerce & engagement

Wholesale and retail

Blog: All sales have become digital – are you ready to take advantage?

Processes & platforms

SAP

Blog: How SAP Analytics Cloud will take your planning to the next level

Winning experiences & solutions

Blog: What is Digital Product Development? Steps to Success

Technology

Blog: Guard Your Users Against a Data Breach with Best Practices for AWS S3 Storage

Winning experiences & solutions

Blog: Accelerate your digital transformation by outsourcing software development

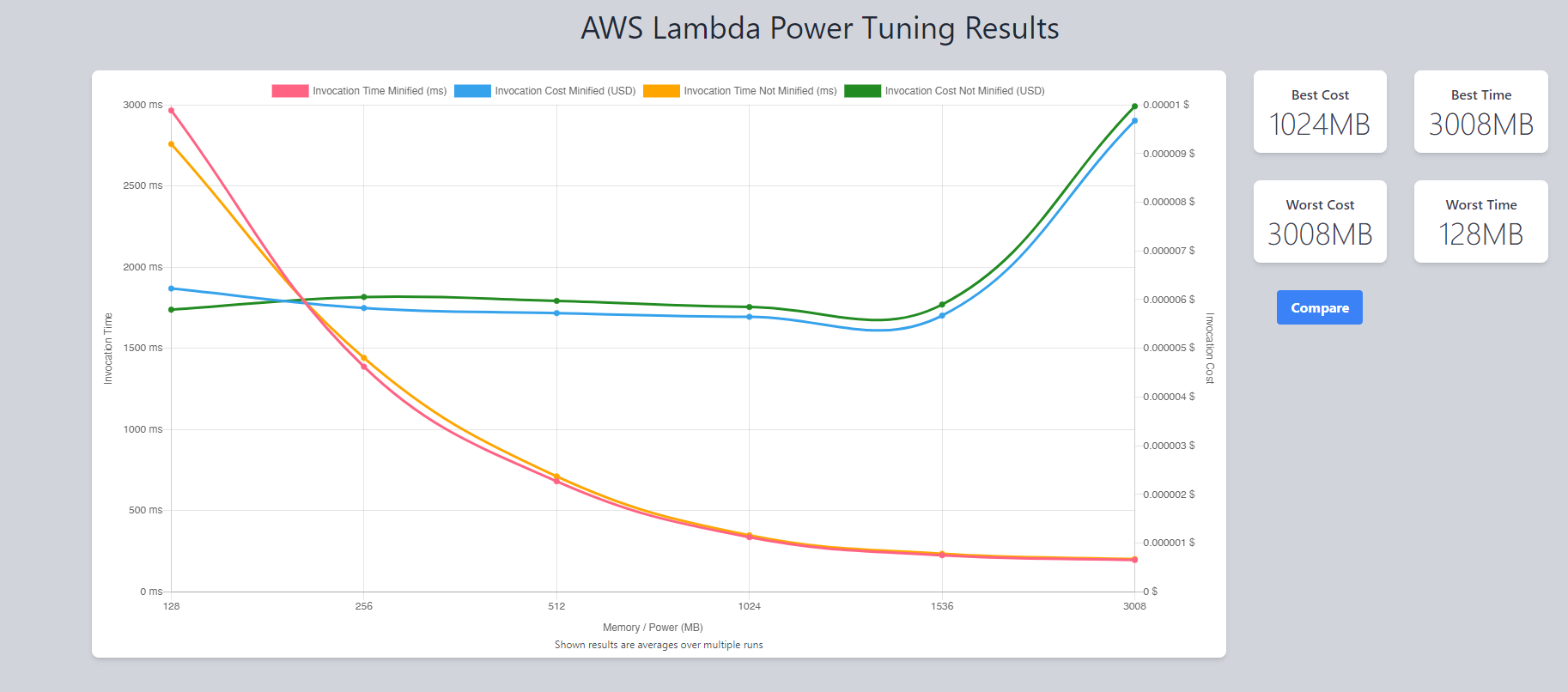

Technology

Software Development

Blog: Optimize Your AWS Lambda Functions for Cost and Performance

Web Development

Accessibility

Blog: Implementing accessibility in a large project – the EVOYA Platform from Revvity

Processes & platforms

SAP

Blog: Five key takeaways from the SAP Build Hackathon 2023

Software Development

How Digital Transformation is Enhancing Customer Experience

Artificial Intelligence

Winning experiences & solutions

Blog: How we use generative AI at Vincit

Technology

Applications

More Radio, Less Static: Building Dynamic Digital Radio Apps

Technology

Software Development

Magento V. 1 EOL and Why It Pays to Build With Composable Software

Technology

Applications

Blog: Will you get in legal trouble for using GitHub Copilot for work?

Artificial Intelligence

Blog: Using ChatGPT for programming

ecommerce

Technology

Delight your Customers During a Recession

Commerce & engagement

Wholesale and retail

Blog: How to Build an Ecommerce Website Step by Step

Design

Blog: How to design the perfect system

Applications

Sustainability

Blog: Four ways to reduce the carbon footprint of digital solutions

Processes & platforms

Blog: Take the lead in your industry with modern business technology

Technology

Commerce & engagement

Blog: Four ways to check if your commerce architecture is future-proof

Processes & platforms

SAP

Blog: SAP can help enable digital transformation

Technology

Applications

Blog: The IBU app wins a Red Dot!

Technology

Design

Blog: Lower the Bar – Starting With Web Accessibility

Commerce & engagement

Blog: Ready To Disrupt the Market by Building Your Own Marketplace?

Technology

Blog: The maintenance of a digital service begins during the design phase – take these three things into account

Technology

Blog: The key elements of sustainable partnership – Vincit x Reactron Technologies

Wholesale and retail

Data-driven operations

Blog: Build true customer value through an e-commerce ecosystem

Technology

Blog: Despite Common Concerns, Headless eCommerce May Be the Easier and More Effective Option

Technology

Blog: Medical segment software development in turbulence

Discover & define

Blog: Digital sales in ecosystems – 3 steps to get you started

Data-driven operations

Blog: Getting the most out of data-driven digital sales

Winning experiences & solutions

Accessibility

Blog: Count me in – Web accessibility impacts all of us

Business Processes

Blog: Summer 2020 Update

Technology

Commerce & engagement

The Ultimate Guide to Headless Commerce - The Future For Digital Sales

Discover & define

Blog: Building tangible competitive advantage in digital sales through sustainability

Strategy

Blog: New tricks for a new decade?

Strategy

Blog: Successful business development looks to potential futures

Digital platform economy

Discover & define

Blog: Reducing carbon footprint in digital services – Case IBU

Strategy

Accessibility

Blog: What will accessibility look like in 2022-2025?

Data-driven operations

Blog: Total digitalization of sales – how to emerge as a winner?

Technology

Blog: Choosing a Mobile Technology in 2022

Design

Blog: What is UX Strategy? And How Can You Create and Transform the Customer Journey?

Strategy

Blog: Leadership Principles for Tomorrow

Commerce & engagement

Blog: What is Headless Commerce? And Everything You Need to Know About Building a Headless Commerce Strategy

Design

Blog: 5 Foundations to Building A Solid UX Strategy for your Brand

Vodcast

Blog: Improving Online Customer Experiences Through Modern Technologies

Technology

Sustainability

Blog: Try a study group to improve your digital accessibility

Discover & define

Blog: 5 reasons successful digital initiatives always consider the human aspect

Design

Blog: How to boost design thinking part 3 – Design critique

Design

Blog: How to boost design thinking part 2: Design communication

Design

Blog: How to boost design thinking part 1 – Design, mentoring and creative thinking

Discover & define

Blog: Efficiency and customer-centeredness through a digital supply chain

Technology

Blog: How to Use RxSwift with MVVM Pattern (A Complete Guide)

Strategy

Blog: Digital transformation isn’t about technology but people

Strategy

Blog: 8+1 tips – how can a development team help their product owner succeed?

Strategy

Blog: Vincit has been awarded ISO 13485 medical certification

Technology

Blog: Riding the Wave – How Sixthreezero’s Investment in Digital Experience Drives Growth

Design

Sustainability

Blog: Circular economy sparks new business – where to start?

Technology

Blog: How I got a campsite from a fully booked Grand Canyon campground

Technology

Design

Blog: Are You Prepared for Digital-First Home Buyers and Renters?

Technology

Commerce & engagement

Blog: Shopify Plus vs. Magento 2 Commerce: Which Platform is Best For You?

Technology

Commerce & engagement

Blog: Shopify vs Shopify Plus | The 9 Key Differences Between the 2 Platforms

Technology

Strategy

Blog: How to start developing information security management in a human-centered way?

Technology

Blog: How Smiles Kept Our Office Safe

Technology

Blog: Technology Spotlight: Chakra UI

Technology

Blog: How to properly build a cloud service

Vodcast

Blog: Driving Dealership Revenue Through Digital Marketing Transformation

Technology

Strategy

Blog: Human-centered information security management

Strategy

Design

Blog: 5 ways in which accessibility will improve your business

Wholesale and retail

Processes & platforms

Blog: Digital eXperience Platforms – the make of a modern digital customer experience

Technology

Blog: Mobile App Maintenance – How to Keep Users Happy

Strategy

Design

Blog: Agile and design – together at last

Vodcast

Blog: Driving Customer Experience with VR/AR Technology

Technology

Design

Blog: Top ten questions from digital design

Technology

Winning experiences & solutions

Blog: Healthcare Mobile Application Development Cycle

Vodcast

Blog: Designing and Developing Digital Accessibility

Winning experiences & solutions

Blog: Designing health and welfare apps is a precise business

Technology

Blog: What Makes Healthcare Application Development So Complicated?

Commerce & engagement

Wholesale and retail

Blog: What Is the Cost of Shopify Plus and Fees?

Vodcast

Blog: My eCommerce Adventure: a Fireside Chat with SixThreeZero CEO

Technology

Blog: What is Shopify Plus?

Technology

Blog: Why Must a Healthcare App be HIPAA Compliant?

Technology

Blog: Generating Code From Your GraphQL Schemas

Vodcast

Blog: Career Hunting in Tech

Technology

Blog: Utilizing PubNub for Real Time Member Communication

Data-driven operations

Blog: Three things to consider when acquiring data services

Technology

Design

Blog: Four ways to enable mobile payments in apps

Technology

Blog: What Are the Top Health App Trends of 2020?

Technology

Blog: When should you use React Native?

Technology

Blog: How to Design & Develop a Mobile Health Application

Technology

Blog: 10 Essential Integrations for Your Ecommerce Website

Technology

Blog: A Mobile App Marketing Checklist

Vodcast

How to Implement Artificial Intelligence (AI) in JavaScript?

Artificial Intelligence

Blog: Data analytics, artificial intelligence, and machine learning – buzz words simplified

Technology

Blog: Fix Custom Font Inconsistency in React Native

Technology

Blog: Balancing All User Requirements During Healthcare Application Development

Technology

Commerce & engagement

Blog: Should you update your ecommerce amid a culture shock?

Technology

Blog: Considerations for Mobile Health App Development

Strategy

Blog: How to organize a one-hour retrospective for 30 people?

Business Processes

Blog: How to Start Remote Work at Your Company

Winning experiences & solutions

Blog: How to tell if your Application Needs an Overhaul or Small Upgrade

Design

Accessibility

Blog: Why you should consider accessibility in your development

Vodcast

Blog: Kelley Blue Book's Journey to Microservices

Technology

Blog: Getting Started with AWS DevOps using AWS Amplify

Technology

Blog: The World (Wide Web) is Mobile, Are You?

Technology

Business Processes

Blog: What a Successful Software Development Relationship Looks Like

Technology

Business Processes

Blog: How to Select Your Software Development Agency

Vodcast

Blog: Duck Tapes Transcript: Rematch and Dimension 7

Technology

Blog: Trying out SwiftUI

Technology

Blog: Experimenting with Style Transfer using PyTorch

Vodcast

Blog: Duck Tapes Transcript: Native Modules and Blockchain with Adem Bilican

Technology

Blog: Introduction to Kubernetes: Part 2

Design

Blog: The Dawn of Planet Centric Design

Technology

Blog: Introduction to Kubernetes: Part 1

Vodcast

Duck Tapes Transcript: Code School life with Alex Aranda

Technology

Blog: Agile Development in a Nutshell

Vodcast

Blog: Duck Tapes Transcript: James Snell's NodeJS OC Talk

Design

Blog: Is the Tesla Cybertruck ugly or beautiful?

Vodcast

Blog: Duck Tapes Transcript: React Native with Gant Laborde

Technology

Things you didn't know about Fibonacci

Technology

Blog: Why and How to Outsource Software Development

Design

Blog: Designing for an age of transition

Technology

Blog: Mobile app benefits for business – Do we need an app just because our competitors got one?

Technology

OAuth 2.0 and OIDC: what should I know about tokens?

Strategy

Blog: Five reasons to utilize analytics in your business

Vodcast

Blog: Rachel Valentine Mix!

Strategy

Blog: Six reasons why you want to code your cloud environment

Vodcast

Blog: Jonny Burger Mix!

Vodcast

Blog: Laurence Bradford Mix!

Vodcast

Blog: William Candillon Mix! - React Native

Technology

Blog: The New Features in React DevTools 4

Vodcast

Blog: Shawn Wang Mix! (aka SWYX)

Vodcast

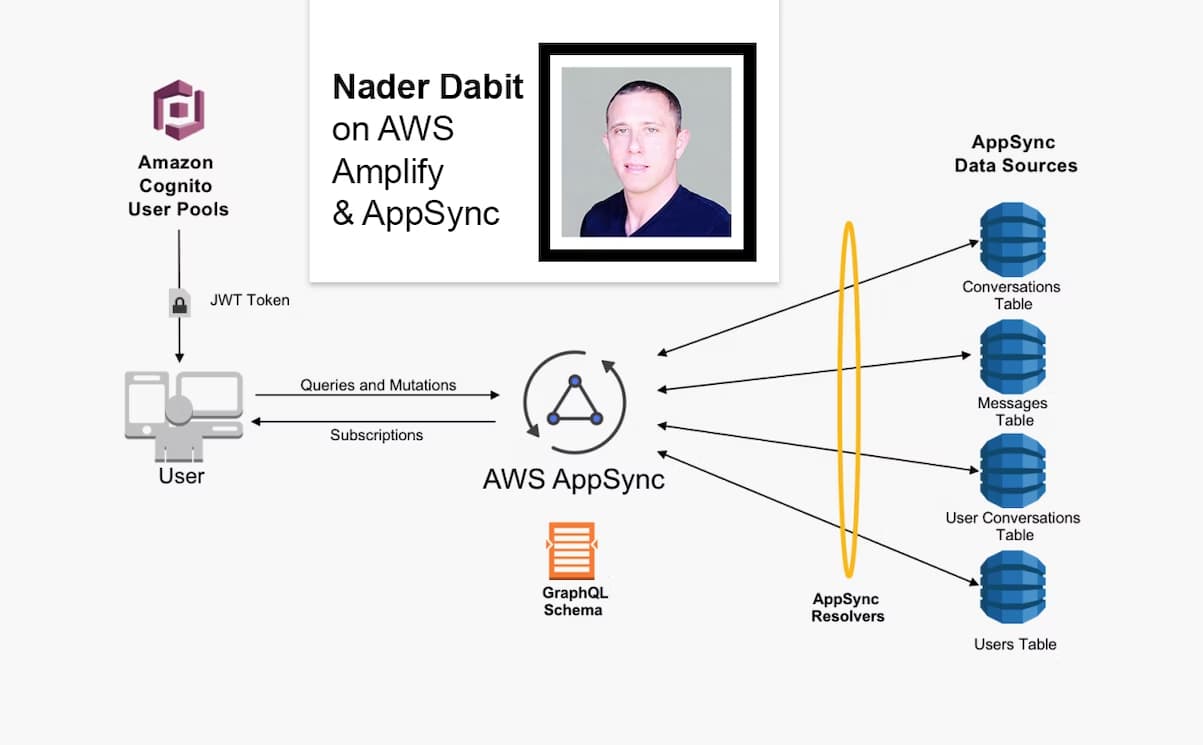

Blog: Nader Dabit Mix!

Vodcast

Blog: The John and Tiffany Mix!

Vodcast

Blog: Eemeli's Open Source Mix!

Vodcast

Blog: React Native Mix Vol.1!

Design

What is User Experience (UX) and Why is it Important?

Vodcast

Blog: Kent C. Dodds Mix!

Vodcast

Blog: Wes Bos Mix!

Design

Blog: A different kind of UX team

Vodcast

The C-Life Podcast - Ville Houttu

Technology

Blog: Getting Started With Storybook 5.0 for React

Technology

Blog: An Introduction to React Hooks

Technology

Blog: Overview of Mobile Development Frameworks in 2019 | Native, React Native, Flutter and Others

Technology

Blog: Kickstart your GraphQL API with Hasura

Technology